AI IDEs or Autonomous Agents? Measuring the Impact of Coding Agents on Software Development

Speed at the Cost of Quality: How Cursor AI Increases Short-Term Velocity and Long-Term Complexity in Open-Source Projects

Overview of Research

AI coding assistants and autonomous coding agents are widely promoted as major accelerators of software development. Developer testimonials and product marketing often report large productivity gains, but rigorous longitudinal evidence has been limited. In two recent empirical studies, we examine these claims using large-scale GitHub data and modern causal inference methods.

Both papers use the same core methodology: staggered difference-in-differences designs with carefully matched control repositories, combined with repository-level quality metrics from static analysis. One study focuses on adoption of an AI-native IDE assistant, Cursor, while the other examines autonomous coding agents that generate pull requests and contribute code at the repository level.
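To make the staggered design concrete, here is a minimal sketch of a group-time average treatment effect, ATT(g, t), in the spirit of the Callaway and Sant'Anna estimator. It assumes a balanced panel, never-adopting matched repositories as the only controls, and no covariates. The function name `att_gt`, the dictionary layout, and the toy numbers are hypothetical illustrations, not the papers' code or data.

```python
from statistics import mean
from typing import Dict, Optional

# Hypothetical panel layout: outcomes[repo][period] -> metric for that period
# (for example, commits per month). adoption[repo] is the adoption period,
# or None for a matched control repository that never adopts.
Panel = Dict[str, Dict[int, float]]


def att_gt(outcomes: Panel, adoption: Dict[str, Optional[int]], g: int, t: int) -> float:
    """Group-time ATT for the cohort that adopts in period g, evaluated at period t.

    The change from the last pre-adoption period (g - 1) to period t among
    adopters in cohort g is compared with the same change among never-treated
    controls. Repositories from other adoption cohorts are left out entirely.
    """
    base = g - 1
    treated = [r for r, a in adoption.items() if a == g]
    controls = [r for r, a in adoption.items() if a is None]
    if not treated or not controls:
        raise ValueError("need at least one adopting and one control repository")
    d_treated = mean(outcomes[r][t] - outcomes[r][base] for r in treated)
    d_control = mean(outcomes[r][t] - outcomes[r][base] for r in controls)
    return float(d_treated - d_control)


# Illustrative toy data: "a" adopts in period 2, "b" in period 3, "c" never adopts.
outcomes = {
    "a": {0: 10, 1: 10, 2: 30, 3: 20},
    "b": {0: 8, 1: 9, 2: 9, 3: 12},
    "c": {0: 5, 1: 6, 2: 7, 3: 8},
}
adoption = {"a": 2, "b": 3, "c": None}

print(att_gt(outcomes, adoption, g=2, t=2))  # 19.0: effect in the adoption period
print(att_gt(outcomes, adoption, g=2, t=3))  # 8.0: one period later, smaller
```

Comparing each cohort only against repositories that never adopt (a common variant also admits repositories that have not yet adopted) avoids using already-treated repositories as controls, a known source of bias in pooled two-way fixed-effects regressions when adoption is staggered. Averaging such ATT(g, t) values by periods since adoption yields an event-study curve.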

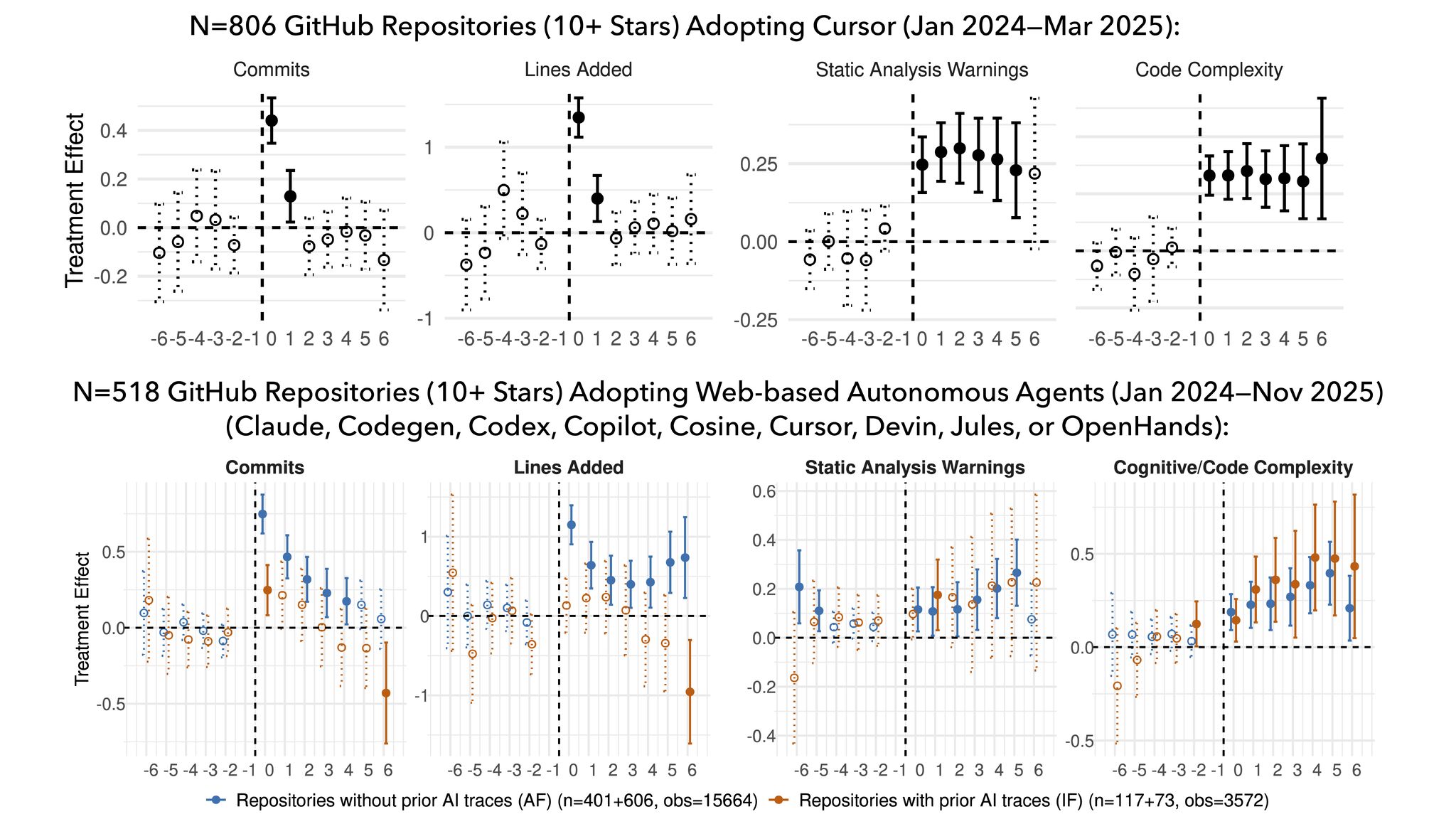

Across both settings, we find strong and statistically significant short-term increases in development velocity after adoption, measured through commits and lines of code added. In the Cursor study, velocity spikes are large but transient, with lines added increasing several-fold in the first month and then tapering off.

Impact of AI coding tools on software development metrics over time. The top row shows Cursor AI adoption, while the bottom row shows broader autonomous agent adoption. Blue lines represent projects without prior AI tool usage, orange lines show projects that previously used AI IDEs.
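A short-lived velocity spike is easiest to see once each repository's calendar months are re-indexed relative to its own adoption month, the usual event-study convention. The sketch below does this for a single repository and expresses each month as a multiple of that repository's pre-adoption average. The function name, the monthly granularity, and the numbers in the usage example are illustrative assumptions. This descriptive ratio has no control group, so it is not the difference-in-differences estimate the studies report.

```python
from statistics import mean
from typing import Dict


def relative_to_adoption(series: Dict[int, float], adoption_month: int) -> Dict[int, float]:
    """Re-index a monthly series by months since adoption (0 = adoption month)
    and scale it by the repository's own pre-adoption mean, so that a value of
    1.0 means 'same level as before adoption'."""
    pre = [v for m, v in series.items() if m < adoption_month]
    if not pre:
        raise ValueError("need at least one pre-adoption month as a baseline")
    baseline = mean(pre)
    if baseline == 0:
        raise ValueError("pre-adoption baseline is zero; the ratio is undefined")
    return {m - adoption_month: v / baseline for m, v in sorted(series.items())}


# Hypothetical lines-added counts for one repository that adopts in month 2.
lines_added = {0: 100, 1: 100, 2: 400, 3: 250, 4: 150}
print(relative_to_adoption(lines_added, adoption_month=2))
# {-2: 1.0, -1: 1.0, 0: 4.0, 1: 2.5, 2: 1.5}
```

In this toy series the adoption month shows a fourfold jump that decays toward the baseline, which is the "large but transient" shape; whether such a shape survives comparison with matched controls is what the difference-in-differences design tests.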

What is less visible from anecdotal reports, but consistent across both papers, is the quality side of the story. In both the IDE assistant and agent settings, adoption is followed by persistent increases in static analysis warnings and code complexity, two widely used proxies for technical debt and maintainability risk. These increases do not revert during the observation window.

The agent study further shows heterogeneous effects depending on prior AI exposure. Repositories that adopt agents as their first AI tool see sizable velocity gains, while those already using AI IDE tools see much smaller or short-lived throughput improvements. Quality degradation signals, however, appear in both groups, with static analysis warnings and cognitive complexity rising by roughly twenty to forty percent on average.
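Percentage effects like these are often derived from regressions on log-transformed outcomes. In that case a coefficient beta corresponds to a 100 * (exp(beta) - 1) percent change, not 100 * beta percent. Whether the studies model log outcomes is an assumption here, and the coefficients below are hypothetical, not estimates from either paper.

```python
import math


def log_coef_to_percent(beta: float) -> float:
    """Percent change implied by a coefficient on a log-transformed outcome.

    Assumes the model regresses log(outcome) on the treatment indicator, so the
    multiplicative effect is exp(beta) and the percent change is
    100 * (exp(beta) - 1).
    """
    return 100.0 * math.expm1(beta)  # expm1 computes exp(beta) - 1 accurately


# Hypothetical coefficients, chosen only to illustrate the conversion.
for beta in (0.18, 0.34):
    print(f"beta = {beta:.2f} -> {log_coef_to_percent(beta):.1f}% change")
```

For small coefficients the two readings are close, but they diverge as effects grow: a coefficient of 0.34 corresponds to roughly a 40 percent increase rather than 34 percent.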

Together, the two studies point to a consistent pattern: AI coding tools accelerate output in the short run, but without stronger quality controls and review practices, they are associated with sustained increases in code complexity and technical debt, which in turn are linked to later slowdowns in velocity.